Bridging the Digital Gap: AI Sign Language Interpretation Solution

China

Accessibility

Implementing Organisation

Baidu

China, Beijing

Implementing Point of Contact

Tianmin Long

Contributor of the Impact Story

International Telecommunication Union (ITU)

Year of implementation

2022

Problem statement

Over 27.8 million deaf and hard-of-hearing (DHH) individuals in China require sign language interpretation services, yet fewer than 500 certified sign language interpreters existed in 2023. That is more than 55,000 DHH individuals for every certified interpreter. Sign language differs fundamentally from spoken Chinese in grammar, structure, and expression, so interpretation requires extracting key information rather than translating word for word. As a low-resource language, sign language also lacks sufficient data for AI model training. Finally, natural sign language combines hand gestures, mouth movements, and body language, so generating it demands complex multi-channel synthesis.

Submission Overview

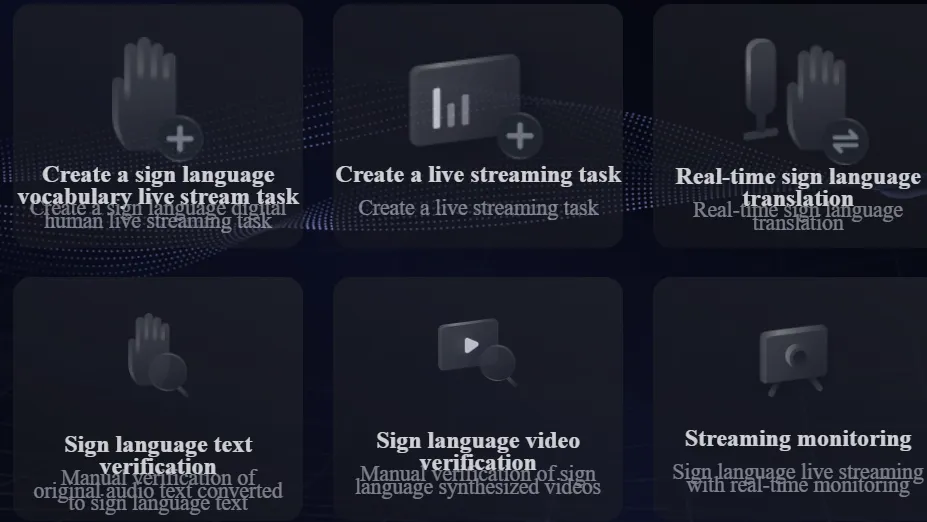

Baidu is a leading Chinese technology company with deep expertise in AI. Its Baidu AI Cloud XiLing digital avatar platform provides sign language interpretation solutions. The platform's models are trained on nearly 10 million natural sign language samples and combine proprietary streaming attention models with end-to-end speech recognition, which improved cloud-based recognition accuracy by more than 15%.

Key Outcomes

Access & Reach

Baidu's XiLing AI Sign Language Platform addresses the critical accessibility gap for over 27.8 million deaf and hard-of-hearing (DHH) individuals in China, where fewer than 500 certified sign language interpreters existed in 2023. Using digital avatars, the platform provides bidirectional translation between sign language and text, speech, or video, achieving over 98% near-field speech recognition accuracy on mobile phones and 98.5% mouth-shape generation accuracy. During the 2022 Winter Olympics, the platform's AI sign language anchors provided 24/7 live broadcasting and drew over 100 million views.

Impact Metrics

Speech Recognition Accuracy on the AI Platform

Baseline Value

NA

Post-Implementation

Over 98% near-field speech recognition rate on mobile phones

Mouth Shape Generation Accuracy on the AI Platform

Baseline Value

NA

Post-Implementation

98.5% mouth-shape generation accuracy recorded for the platform's AI sign language virtual interpreter solution

Scale of sign language data corpus used for training the Platform (number of samples)

Baseline Value

NA

Post-Implementation

Nearly 10 million natural sign language samples collected for training the AI Platform

Implementation Context

Deployed across China at government service counters, hospitals, banks, airports, transportation hubs, educational institutions, and broadcast media, including coverage of the 2022 Winter Olympics.

The solution serves the 27.8 million deaf and hard-of-hearing individuals in China, as well as the general public through more accessible public services.

Key Partnerships

Tianjin University of Technology School for the Deaf

Replicability & Adaptation

- Expand training datasets to include regional sign language variations and dialects

- Adapt the platform to national sign languages other than Chinese Sign Language

- Use federated learning to preserve privacy during on-device model updates

- Develop lightweight transformer models for diverse hardware platforms

- Partner with local deaf communities and special education experts

The plug-and-play All-in-One Machine enables rapid deployment in diverse settings. The solution supports online platform deployment within hours or offline plug-and-play use, as well as integration into TVs, apps, websites, and WeChat mini-programs, and it has been proven at scale during the 2022 Winter Olympics.

Supporting Materials

* The data presented is self-reported by the respective organisations. Readers should consult the original sources for further details.