Jan-Sahayak: A Sovereign, Voice-First AI Operating System for Accessible Public Services

India

Accessibility

Implementing Organisation

Zangoh [Newzera Tech Labs Pvt Ltd]

India, Madhya Pradesh

Implementing Point of Contact

Shrey Sharma

CEO and Founder

Contributor of the Impact Story

ALIMCO

Year of implementation

2019

Problem statement

India's 26.8 million (2.68 crore) Persons with Disabilities face a critical challenge in accessing digital public infrastructure. While government services have been digitized through platforms like UPI, UMANG, Samagra, and Land Records, the interface layer remains structurally hostile: visual dashboards, touchscreens, CAPTCHAs, small buttons, and OTP-based authentication create insurmountable barriers for citizens with visual impairments (blindness or low vision), motor disabilities (for example, inability to type due to cerebral palsy or Parkinson's disease), and neurodivergence. A visually impaired pensioner cannot independently check payment status; they must physically travel to a kiosk or depend on sighted family members, losing autonomy in the process. The digital divide is not only about connectivity; it is also about interface design that prevents millions from fully accessing and exercising their entitlements.

Submission Overview

Zangoh is an AI technology company working on voice and multimodal solutions for Indian languages and dialects. The company has deployed its core platform, Zangoh Zing, across government and commercial projects, including powering voice and video search for IndiaMART and developing language translation systems (Bhashasetu and Kalasetu) for the Ministry of Information & Broadcasting across 22 Indian languages. The company's technology has also been integrated into the Simhastha 2028 pilgrimage infrastructure. Zangoh's ASR engine, Zangoh-Vani, is designed to handle hyper-local dialects such as Malwi, Nimadi, and Bundeli, as well as non-standard speech patterns including dysarthric speech associated with motor disabilities. The Jan-Sahayak solution builds on this existing infrastructure and is designed for deployment on India's INDIAai Cloud to ensure data sovereignty and residency within the country.

AI Technology Used

Key Outcomes

Access & Reach

Inclusion & Equity

User Experience & Satisfaction

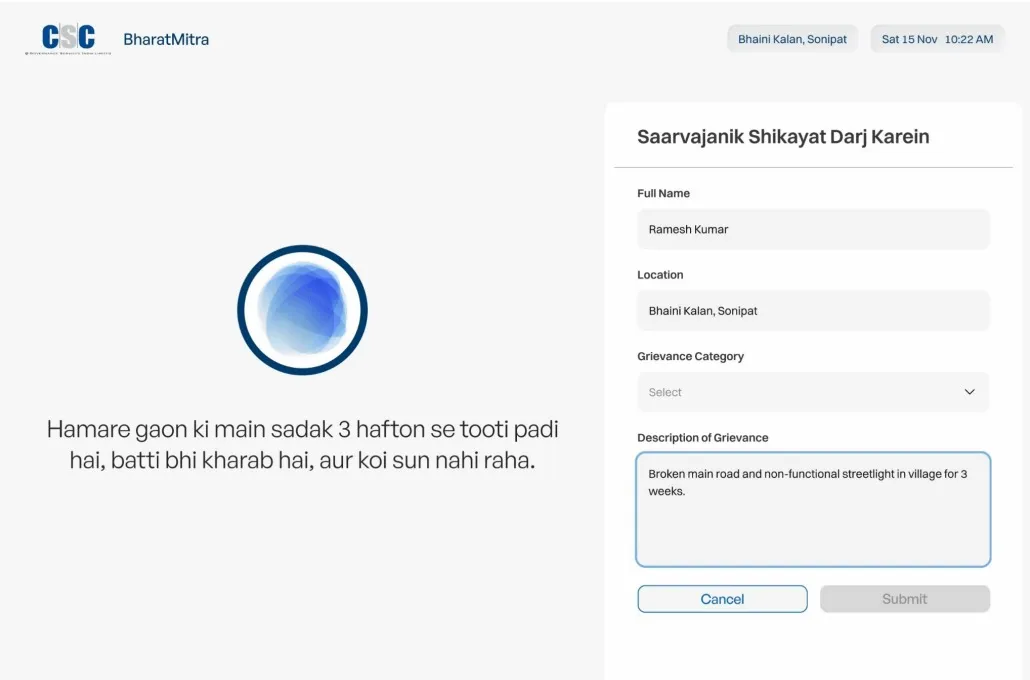

Jan-Sahayak transforms India's screen-based governance into voice-based assistance, eliminating the visual interface barrier that excludes persons with disabilities from digital public services. The AI-driven voice agent enables 100% independent navigation for blind and motor-impaired citizens, reducing service access time by 85%. Users can now complete complex tasks, such as checking pension status or PM Kisan eligibility, in under 45 seconds through natural conversation in their native dialect, where previously they required assisted travel to physical kiosks. The solution achieved 95% intent capture accuracy and increased accessibility by 400% for users uncomfortable with text interfaces, as demonstrated in the IndiaMART deployment. By processing queries in more than 22 languages and executing backend API tasks through voice alone, Jan-Sahayak operationalizes the Rights of Persons with Disabilities Act 2016, moving India from Digital India to Inclusive India and ensuring that disability does not equate to dependency in the AI age.

Impact Metrics

Time required for persons with disabilities to complete government service tasks

Baseline Value

Citizens had to undertake physical travel and required intermediary assistance to complete government service tasks

Post-Implementation

Tasks completed in under 45 seconds via voice interaction; overall service access time reduced by 85% without requiring physical travel

System's ability to correctly understand and process user requests through voice/video interaction

Baseline Value

Standard interfaces were unable to maintain accuracy in text-based filtering, particularly for users who were not comfortable using text input

Post-Implementation

95% intent capture accuracy was achieved, along with a 400% increase in intent capture for users uncomfortable with text interfaces

Time from grievance submission to resolution

Baseline Value

Standard processing time using traditional grievance filing processes

Post-Implementation

85% reduction in "Time-to-Resolution" through automated voice-to-action workflow

Implementation Context

Urban and rural settings in India

Visually impaired people, People with motor disabilities, Elderly citizens

Key Partnerships

Ministry of Information & Broadcasting, Government of India, NVIDIA Inception Partner, AWS Activate, Google Cloud Programme

Replicability & Adaptation

- A voice-first, screen-less design addresses interface barriers common across digital public services and can be applied to pensions, healthcare, and citizen portals worldwide.

- It works as an overlay on existing systems, avoiding backend changes and enabling rapid, low-cost deployment across geographies.

- By leveraging IVR, WhatsApp, and feature phones instead of smartphones or apps, it is suitable for rural and low-resource settings globally.

- Training ASR on local speech patterns and non-standard dialects helps the system adapt to multilingual contexts, acknowledging that the official language often differs from everyday usage.

Supporting Materials

* The data presented is self-reported by the respective organisations. Readers should consult the original sources for further details.