Jhana.ai platform

India

Justice and Governance

Implementing Organisation

Jhana.ai

India, Karnataka, Bengaluru

Implementing Point of Contact

Smita Gupta

Director, GovTech and Public Sector

Contributor of the Impact Story

Carnegie India

Year of implementation

2024

Problem statement

Legal risk accumulates silently across everyday life events such as housing, marriage, inheritance, taxation, and disputes, yet citizens lack any framework for legal wellness. Interaction with the Indian legal system is often opaque, slow, and expert-dependent, with high information asymmetry and limited accountability. Judicial institutions face a different but related challenge: massive scale, fragmented records, frequent roster changes, and loss of institutional memory. Courts process tens of thousands of pages weekly, yet judges and registries remain constrained by cognitive bandwidth rather than intent. Filing scrutiny, case preparation, research, dictation, and publishing remain manual and repetitive, leading to delays, errors, and inconsistent access to justice. Existing digitization efforts often replicate paperwork digitally rather than reducing effort or improving substance. The absence of AI-native legal infrastructure prevents courts from transforming raw filings into structured, searchable, and auditable institutional memory. Without such systems, both citizens and the judiciary continue to bear avoidable legal risk, administrative burden, and delays in justice delivery.

Submission Overview

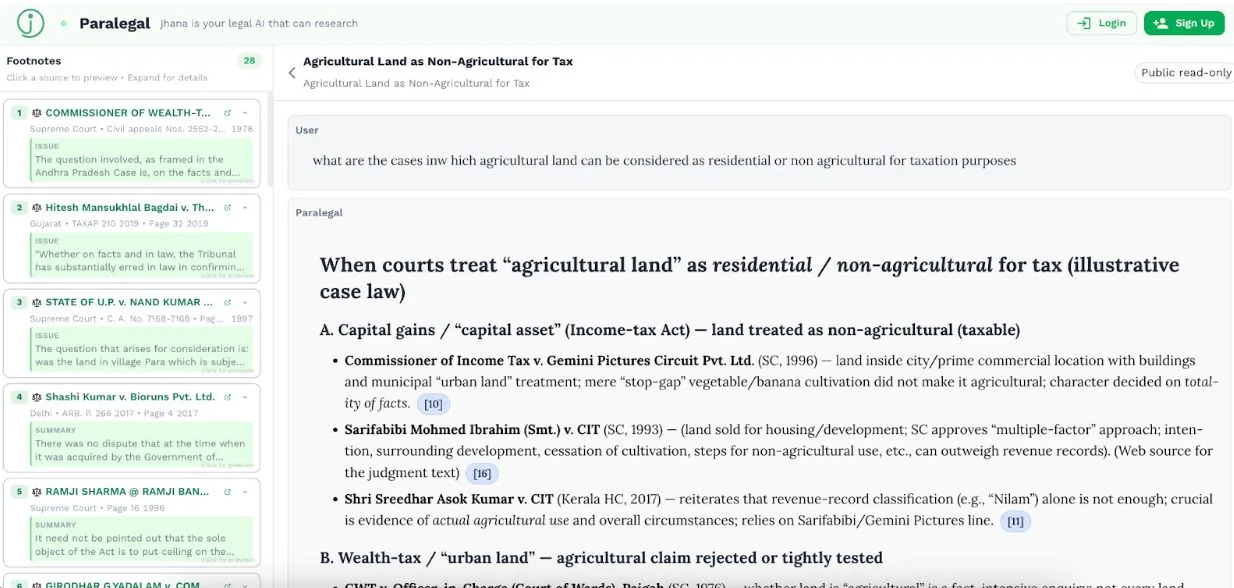

Jhana is India’s leading legal AI lab, founded in 2022 at Harvard University, $1.7M seed-funded, and serving over 15,000 monthly active lawyers, judges, and principals. Courtroom by jhana is our pioneering judiciary practice, which automates rote work across the case lifecycle and brings powerful decision-support tools to judges and listing registrars. AI handles filing scrutiny, metadata extraction, docket preparation, and more, eliminating critical back-office delays between filing, numbering, listing, and disposal. Courtroom is active, in pilot or at scale, at five courts, most notably the High Courts of Madras and Karnataka.

PUBSEC by jhana is our public sector practice, built atop our operational fluency, our understanding of sensitive government systems and discordant staff incentives, and our expertise in change management. PUBSEC and Courtroom are designed as self-owned, composable API blocks: the state retains its data sovereignty while benefitting from online inference on jhana. Our APIs power or talk to a variety of MIS and DMS systems, and we have developed such proficiency in the patching and scripting work involved that we can now rapidly take a legacy government system live.

jhana’s AI tools for researching case law and procedure count over 150 judges and 200 government staff among voluntary users who signed up, and even subscribed, of their own accord. Our proprietary National Legal Archive of over 16 million Indian legal documents is updated daily and structured to support tools that return verifiable citations. jhana follows a strict responsible-AI framework emphasizing human-in-the-loop validation, no outcome prediction, court-owned data, and rigorous footnotes for explainable audit trails. Backed by leading technology investors and recognized across Asia for legal innovation, jhana combines deep legal expertise, advanced AI research, and on-ground operational fluency to improve access to justice, judicial efficiency, and legal certainty at population scale.

AI Technology Used

Generative AI

Key Outcomes

Efficiency & Productivity

Access & Reach

Accuracy & Quality Improvement

User Experience & Satisfaction

Knowledge & Skills Impact

Access to justice in India is often delayed due to fragmented records, and because filings, case indexing, and documentation consume significant time. Jhana.ai's legal infrastructure tools have compressed filing scrutiny from hours to minutes and indexing from days to a few minutes. The platform processes thousands of pages weekly for judicial note-taking and has been organically adopted by over 150 judges, which has led to improvements in workflow efficiencies and faster case resolution.

Impact Metrics

Average time taken to complete filing scrutiny checks on commercial e-filings

Baseline Value

1.5 hours was the turnaround time before the AI intervention

Post-Implementation

Turnaround time to complete filing scrutiny checks came down to 3 minutes

Turn-around time from e-filing to indexing/processing completion

Baseline Value

2-10 days turnaround time

Post-Implementation

Less than 15 minutes turnaround time

Time taken to convert published cause-list into processed, populated judge dashboards

Baseline Value

1-10 days for conversion

Post-Implementation

Less than 15 minutes for conversion

Volume of pages processed per week for judicial note-taking and preparation

Baseline Value

No baseline figure reported; the workload was fragmented due to manual reading

Post-Implementation

Over 10,000 pages processed per week

Number of verified judge users adopting AI dictation tool organically

Baseline Value

NA (number of verified judge users)

Post-Implementation

Over 150 verified judge users (reporting period end: 26/11/2025)

Net Promoter Score / satisfaction rating from pilot evaluation team benchmarking headnotes

Baseline Value

NA (score out of 5 / NPS percentage)

Post-Implementation

100% NPS (5 out of 5)

Number of verified legal professionals actively using AI legal research and drafting tool

Baseline Value

NA (number of verified legal professionals)

Post-Implementation

10,535 verified legal professionals
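
As a rough check on the turnaround figures reported above, the reductions can be expressed as a speed-up factor and a percentage. The sketch below uses only the self-reported values; the helper name `reduction` is hypothetical, and the indexing calculation assumes calendar days of 24 hours, which the source does not specify.

```python
def reduction(baseline_minutes: float, post_minutes: float) -> tuple[float, float]:
    """Return (speed-up factor, percentage reduction) for a turnaround time."""
    speedup = baseline_minutes / post_minutes
    pct_reduction = (1 - post_minutes / baseline_minutes) * 100
    return speedup, pct_reduction


# Filing scrutiny: 1.5 hours before, 3 minutes after (as reported).
speedup, pct = reduction(baseline_minutes=1.5 * 60, post_minutes=3)
print(f"Filing scrutiny: {speedup:.0f}x faster, {pct:.1f}% less time")

# E-filing to indexing: best-case baseline of 2 days, post value under 15 minutes.
# ASSUMPTION: calendar days (24 h); working-day accounting would give a smaller factor.
MINUTES_PER_DAY = 24 * 60
min_speedup, _ = reduction(baseline_minutes=2 * MINUTES_PER_DAY, post_minutes=15)
print(f"Indexing: at least {min_speedup:.0f}x faster under the calendar-day assumption")
```

Because the indexing baseline is a range and the post-implementation value is an upper bound, the second figure is a lower bound on the improvement rather than a point estimate.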

Implementation Context

Supreme Court of India; High Courts of Karnataka, Madras (Tamil Nadu), Telangana, and Kerala; Income Tax Appellate Tribunal (ITAT); district courts; arbitration bodies

Judges, registrars, court staff, government lawyers, arbitrators, mediators, and lawyers; indirectly, litigants and citizens across urban and semi-urban India, including underserved court users facing delays and information barriers

Key Partnerships

Government and Judicial Partnerships

Replicability & Adaptation

- The use case demonstrates strong replication potential across Indian states and comparable common-law jurisdictions, provided procedural, linguistic, and institutional adaptations are undertaken

- The core AI architecture is court-agnostic and composable, but judicial systems are rule-dense, locally governed, and operationally idiosyncratic, which necessitates structured adaptation rather than plug-and-play deployment

- Replication is most feasible in environments where:

  - Courts already use CIS/DMS or e-filing systems (even if fragmented)

  - There is institutional willingness to pilot AI under controlled governance (LoI / MoU / MLE)

  - Registries and judges face scale constraints similar to Indian High Courts (volume of filings, multilingual records, frequent roster changes)

- The platform has already demonstrated transferability across multiple High Courts, tribunals, and ADR/ODR institutions, each with distinct procedural rules, validation standards, and stakeholder expectations, which indicates that replication is operationally proven but not trivial

- Replication requires structured localization, not model retraining in the abstract

- Court-specific rules need to be encoded, such as filing scrutiny checklists, court fee schedules, jurisdictional requirements, and defect typologies and cure workflows

- Alignment with local cause-listing, roster allocation, and lifecycle management practices is required

- Support for local languages and scripts used in filings, orders, and dictation must be provided

- Region-specific document formats, stamps, seals, and legacy scanning practices need to be handled

- Custom KPI frameworks must be aligned with the court's priorities (speed, accuracy, adoption, neutrality)

- Human-in-the-loop validation standards should reflect judicial comfort levels with AI assistance

- Clear guardrails must exclude outcome prediction and preserve judicial discretion

- Hands-on training programs for judges, registries, and clerks are essential

- Gradual workflow augmentation should be implemented rather than forced replacement of existing processes

- Continuous Monitoring, Learning, and Evaluation (MLE) cycles must be maintained to build institutional trust
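
To make the idea of "structured localization" in the list above more concrete, here is a minimal sketch of how court-specific scrutiny rules might be encoded as data rather than baked into a model. It is not jhana's implementation: the class names, rule codes, the fee threshold of 500, and the example court are all hypothetical placeholders chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable

Filing = dict  # hypothetical: metadata extracted from one e-filing


@dataclass(frozen=True)
class ScrutinyRule:
    code: str                          # short defect code shown to the registry
    description: str                   # human-readable checklist item
    passes: Callable[[Filing], bool]   # True when the filing satisfies the rule


@dataclass
class CourtProfile:
    name: str
    languages: list[str]
    rules: list[ScrutinyRule] = field(default_factory=list)

    def scrutinise(self, filing: Filing) -> list[str]:
        """Return defect codes for a human reviewer; nothing is auto-rejected."""
        return [rule.code for rule in self.rules if not rule.passes(filing)]


# ASSUMPTION: illustrative rules and values only, not any real court's checklist.
example_court = CourtProfile(
    name="Example High Court",
    languages=["en", "kn"],
    rules=[
        ScrutinyRule("FEE", "Court fee paid meets the schedule minimum",
                     lambda f: f.get("court_fee_paid", 0) >= 500),
        ScrutinyRule("VAK", "Vakalatnama attached",
                     lambda f: "vakalatnama" in f.get("attachments", [])),
    ],
)

filing = {"court_fee_paid": 200, "attachments": ["petition", "vakalatnama"]}
defects = example_court.scrutinise(filing)
print(defects)  # ['FEE']
```

Keeping each court's checklist in a separate profile means a new court can be onboarded by writing a new profile, while the flagged defects still go to registry staff for confirmation, in line with the human-in-the-loop guardrails described above.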

Supporting Materials

* The data presented is self-reported by the respective organisations. Readers should consult the original sources for further details.